SDXL, also known as Stable Diffusion XL, is a highly anticipated open-source generative AI model that was recently released to the public by Stability AI. It is an upgrade over previous versions of SD such as 1.5, 2.0, and 2.1, and offers significant improvements in image quality, aesthetics, and versatility. For a full list of improvements and limitations, check out our dedicated post.

In this guide, we will walk you through setting up and installing SDXL v1.0, including downloading the necessary models and installing them into your Stable Diffusion interface. This guide is tailored towards AUTOMATIC1111 and Invoke AI users, but ComfyUI is also a great choice for SDXL; we’ve published an installation guide for ComfyUI, too! Let’s get started:

Step 1: Downloading the SDXL v1.0 Model Files

Provided you have AUTOMATIC1111 or Invoke AI installed and updated to the latest version, the first step is to download the required model files for SDXL 1.0: the base model, the LoRA, and the refiner model. You can find the download links for these files below:

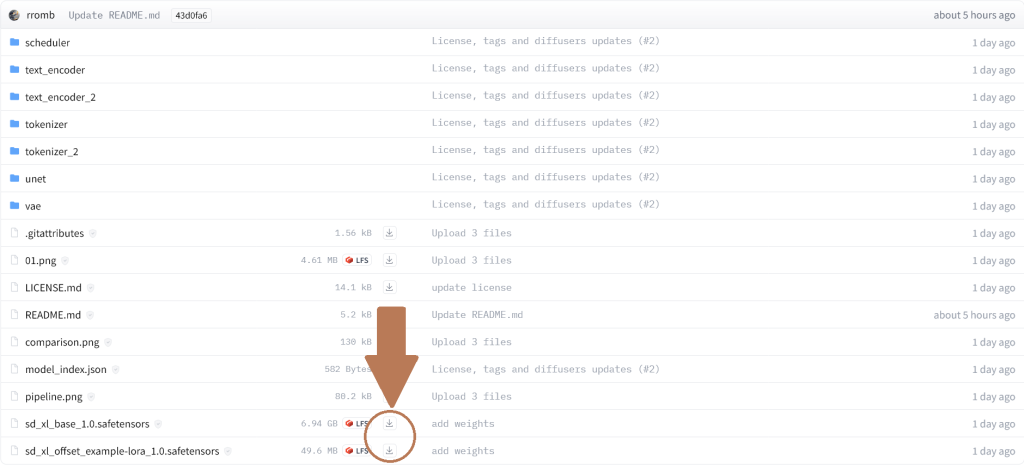

- SDXL 1.0 base model & LoRA: https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0 – Head over to the model card page and navigate to the “Files and versions” tab. Here you’ll want to download both sd_xl_base_1.0.safetensors (the base model) and sd_xl_offset_example-lora_1.0.safetensors (the LoRA).

- Refiner model: https://huggingface.co/stabilityai/stable-diffusion-xl-refiner-1.0 – Same as before, only here you’ll need to download the “sd_xl_refiner_1.0.safetensors” file. Please note that the base and refiner files are fairly large (around 6-7GB each), so they might take a while to download depending on your internet speed!
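If you prefer to script the downloads, here is a minimal sketch using the `hf_hub_download` function from the `huggingface_hub` Python package. It assumes the package is installed (`pip install huggingface_hub`) and that the filenames still match those listed on the two model cards; the download folder you pass in is up to you.

```python
"""Download the SDXL 1.0 files from Hugging Face (sketch)."""
from pathlib import Path

# Repository IDs and filenames as listed on the model cards (assumed current;
# check the "Files and versions" tab in case files were added or renamed).
SDXL_FILES = {
    "stabilityai/stable-diffusion-xl-base-1.0": [
        "sd_xl_base_1.0.safetensors",
        "sd_xl_offset_example-lora_1.0.safetensors",
    ],
    "stabilityai/stable-diffusion-xl-refiner-1.0": [
        "sd_xl_refiner_1.0.safetensors",
    ],
}


def planned_downloads(dest: Path) -> list[tuple[str, str, Path]]:
    """Return (repo_id, filename, local_path) for every file to fetch."""
    return [
        (repo, name, dest / name)
        for repo, names in SDXL_FILES.items()
        for name in names
    ]


def download_all(dest: Path) -> None:
    """Fetch each file into `dest`, skipping files that already exist."""
    # Imported here so the planning code above works without the package installed.
    from huggingface_hub import hf_hub_download

    dest.mkdir(parents=True, exist_ok=True)
    for repo, name, path in planned_downloads(dest):
        if path.exists():
            print(f"skipping {name} (already downloaded)")
            continue
        hf_hub_download(repo_id=repo, filename=name, local_dir=dest)
        print(f"downloaded {name}")
```

Calling `download_all(Path("sdxl_downloads"))` would place all three files in a single folder (the name is just an example), ready to be moved into your UI’s model folders.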

Step 2: Move the Model Files to Your Models Folder

Once you’ve downloaded all three of the required files, you’ll need to place them into the correct folders. The base model and the refiner model go into your Stable Diffusion models folder.

AUTOMATIC1111:

/stable-diffusion-webui/models/Stable-diffusion – Place the two files where you see the “Put Stable Diffusion checkpoints here” text file.

Invoke AI:

/invoke-ai/models/sdxl/main – Place the base model here.

/invoke-ai/models/sdxl-refiner/main – Place the refiner model here.

Step 3: Installing the LoRA

The LoRA file should be placed in the following folder:

AUTOMATIC1111:

/stable-diffusion-webui/models/Lora

Invoke AI:

/invoke-ai/models/sdxl/lora
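The folder layout from Steps 2 and 3 can also be captured in a small script. This is a sketch under a few assumptions: the UI root is the `stable-diffusion-webui` or `invoke-ai` folder, the filenames are the ones from the model cards, and the UI keys (`"automatic1111"`, `"invokeai"`) are hypothetical labels chosen for this example.

```python
"""Move downloaded SDXL files into the folders used by each UI (sketch)."""
import shutil
from pathlib import Path

# Destination folders from this guide, relative to each UI's install root.
DESTINATIONS = {
    "automatic1111": {
        "base": Path("models/Stable-diffusion"),
        "refiner": Path("models/Stable-diffusion"),
        "lora": Path("models/Lora"),
    },
    "invokeai": {
        "base": Path("models/sdxl/main"),
        "refiner": Path("models/sdxl-refiner/main"),
        "lora": Path("models/sdxl/lora"),
    },
}

# Which role each downloaded file plays (filenames assumed from the model cards).
FILE_ROLES = {
    "sd_xl_base_1.0.safetensors": "base",
    "sd_xl_refiner_1.0.safetensors": "refiner",
    "sd_xl_offset_example-lora_1.0.safetensors": "lora",
}


def target_path(ui: str, ui_root: Path, filename: str) -> Path:
    """Return where `filename` should live for the given UI."""
    role = FILE_ROLES[filename]
    return ui_root / DESTINATIONS[ui][role] / filename


def install(ui: str, ui_root: Path, download_dir: Path) -> list[Path]:
    """Move each known file from download_dir into place; return the new paths."""
    moved = []
    for filename in FILE_ROLES:
        src = download_dir / filename
        if not src.exists():
            continue  # not downloaded yet; skip rather than fail
        dst = target_path(ui, ui_root, filename)
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.move(str(src), str(dst))
        moved.append(dst)
    return moved
```

Files that haven’t been downloaded are skipped rather than treated as errors, so the script can be re-run after a partial download.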

Step 4: Loading the SDXL 1.0 Model

Now that you’ve got the model files downloaded and in the correct folders, you should be good to go! Start by launching your Stable Diffusion interface (for AUTOMATIC1111, run “webui-user.bat”).

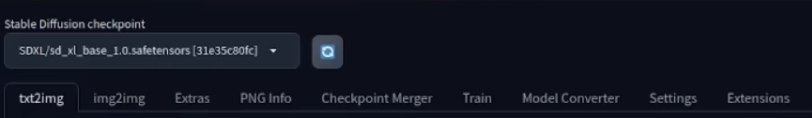

In the “Stable Diffusion checkpoint” dropdown in the top-left, select the new “sd_xl_base” checkpoint. It might take a few minutes for the model to load fully. Similarly, in Invoke AI, just select the new SDXL model.

It’s worth mentioning that older extensions may not work with SDXL 1.0, since it is a newer model and works differently from previous models. If you run into any issues, we’d recommend disabling all your extensions from the Extensions tab and restarting the web UI. Some users have also run into problems when using the “xformers” optimization command-line argument, so you might want to disable that, too. You can do this by editing your “webui-user.bat” file and removing “--xformers” from the COMMANDLINE_ARGS line.
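The `--xformers` edit can be done by hand in any text editor; as a sketch, the same change in Python looks like this. It assumes your `webui-user.bat` sets its arguments on a single `set COMMANDLINE_ARGS=` line (as the stock file does), and `edit_file` is a hypothetical helper that keeps a `.bak` copy before writing.

```python
"""Strip a flag from COMMANDLINE_ARGS in webui-user.bat (sketch)."""
from pathlib import Path


def remove_flag(bat_text: str, flag: str = "--xformers") -> str:
    """Return bat_text with `flag` removed from the COMMANDLINE_ARGS line."""
    out_lines = []
    for line in bat_text.splitlines(keepends=True):
        if line.strip().lower().startswith("set commandline_args="):
            prefix, _, args = line.partition("=")
            body = args.rstrip("\r\n")
            ending = args[len(body):]  # preserve the original line ending
            kept = [arg for arg in body.split() if arg != flag]
            line = f"{prefix}={' '.join(kept)}{ending}"
        out_lines.append(line)
    return "".join(out_lines)


def edit_file(path: Path, flag: str = "--xformers") -> None:
    """Rewrite the .bat file in place, keeping a backup next to it."""
    original = path.read_text()
    path.with_name(path.name + ".bak").write_text(original)
    path.write_text(remove_flag(original, flag))
```

Other arguments on the line are left untouched; only the exact `--xformers` token is dropped.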

Step 5: Recommended Settings for SDXL

SDXL works best at a resolution of 1024 x 1024. However, you can still change the aspect ratio of your images.

Here are the image sizes that are used in DreamStudio, Stability AI’s official image generator:

- 21:9 – 1536 x 640

- 16:9 – 1344 x 768

- 3:2 – 1216 x 832

- 5:4 – 1152 x 896

- 1:1 – 1024 x 1024

Here is the full list of supported resolutions, as provided in the official SDXL documentation by Stability AI:

- 640 x 1536

- 768 x 1344

- 832 x 1216

- 896 x 1152

- 1024 x 1024

- 1152 x 896

- 1216 x 832

- 1344 x 768

- 1536 x 640

Stability recommends keeping the total number of pixels at roughly 1024 x 1024 (about one megapixel) for best results. Sampling steps and sampling method are largely a matter of user preference and will also depend on the type of images you’re creating. As a general baseline, we’d recommend sticking with Euler a, but if you’re generating artistic images like paintings and drawings, DDIM might give you better results. Always experiment with these settings and try out your prompts with different samplers!
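To turn a desired aspect ratio into one of the resolutions listed above, you can pick the entry whose width-to-height ratio is closest. This is a sketch of one reasonable rule (nearest ratio on a log scale), not something taken from Stability’s documentation.

```python
"""Pick the SDXL resolution closest to a desired aspect ratio (sketch)."""
import math

# The resolutions listed in this guide, as (width, height).
SDXL_RESOLUTIONS = [
    (640, 1536), (768, 1344), (832, 1216), (896, 1152), (1024, 1024),
    (1152, 896), (1216, 832), (1344, 768), (1536, 640),
]
MAX_PIXELS = 1024 * 1024


def closest_resolution(aspect_w: float, aspect_h: float) -> tuple[int, int]:
    """Return the listed resolution whose aspect ratio is nearest the target.

    Ratios are compared on a log scale so that 2:1 and 1:2 count as
    equally far from 1:1.
    """
    target = math.log(aspect_w / aspect_h)
    return min(
        SDXL_RESOLUTIONS,
        key=lambda wh: abs(math.log(wh[0] / wh[1]) - target),
    )


print(closest_resolution(16, 9))  # (1344, 768)
print(closest_resolution(4, 3))   # (1152, 896)
```

Every entry in the list stays at or under 1024 x 1024 pixels in total, which the `MAX_PIXELS` constant lets you check.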

Step 6: Using the SDXL Refiner

As the name suggests, the refiner model refines your images for better quality. Note that in Invoke AI this step may not be required, as it is designed to run the whole process in a single image generation. To use the refiner model:

- Navigate to the image-to-image tab within AUTOMATIC1111 or Invoke AI.

- Change the checkpoint/model to sd_xl_refiner (or sdxl-refiner in Invoke AI).

- You can use any image that you’ve generated with the SDXL base model as the input image.

- Set the denoising strength anywhere from 0.25 to 0.6 – the results will vary depending on your image so you should experiment with this option.
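Outside of a web UI, the same refiner pass can be scripted as image-to-image with the Hugging Face `diffusers` library. This sketch assumes `torch` and `diffusers` are installed and a CUDA GPU is available; `refine` and `check_strength` are hypothetical helper names, and `strength` is used here as the counterpart of the web UI’s denoising strength, limited to the 0.25-0.6 range suggested above.

```python
"""Refiner pass as image-to-image with Hugging Face diffusers (sketch)."""

REFINER_REPO = "stabilityai/stable-diffusion-xl-refiner-1.0"


def check_strength(strength: float, low: float = 0.25, high: float = 0.6) -> float:
    """Return strength unchanged, or raise if it is outside the suggested range."""
    if not low <= strength <= high:
        raise ValueError(f"strength {strength} is outside the {low}-{high} range")
    return strength


def refine(image_path: str, prompt: str, strength: float = 0.3,
           out_path: str = "refined.png"):
    """Run the refiner over an image generated with the SDXL base model."""
    # Heavy imports are local so the helper above works without them installed.
    # Requires torch, diffusers and a CUDA-capable GPU.
    import torch
    from diffusers import StableDiffusionXLImg2ImgPipeline
    from diffusers.utils import load_image

    pipe = StableDiffusionXLImg2ImgPipeline.from_pretrained(
        REFINER_REPO,
        torch_dtype=torch.float16,
        variant="fp16",
        use_safetensors=True,
    ).to("cuda")
    init_image = load_image(image_path).convert("RGB")
    # `strength` controls how much of the input image is re-noised and redrawn.
    result = pipe(prompt=prompt, image=init_image,
                  strength=check_strength(strength)).images[0]
    result.save(out_path)
    return result
```

For example, `refine("base_output.png", "a lighthouse at dusk", strength=0.35)` would refine a hypothetical base-model output; widen `low`/`high` in `check_strength` if you want to experiment beyond the suggested range.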

Tips for Using SDXL

- Minimalistic Prompting: SDXL is much better at understanding your prompts now, so try not to overcomplicate them! Compared to SD 1.5 and 2.1, you should get much better results when using simple prompts describing a single scene or subject. You can still use style prompts, such as image and artistic styles, but these are best left at the end of your prompt.

- Negative Prompts: Negative prompts aren’t as necessary as they were in previous models. Certain negative prompts that you may have used before (such as “blur”, “blurry”, “unrealistic”, etc.) may no longer be required and might actually make your image worse. You should still experiment with negative prompts, but don’t go overboard.

- Experiment and Iterate: Generating high-quality images with AI models often requires experimentation and fine-tuning. Don’t be afraid to try different prompts, settings, and model configurations to achieve different results. We recommend increasing your batch size so that you can get at least 2 images from each prompt.
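If you want to iterate on prompts from a script, AUTOMATIC1111 exposes a local web API. The sketch below assumes the UI was launched with `--api` added to COMMANDLINE_ARGS and is listening on the default `http://127.0.0.1:7860`; the `steps=30` default is a placeholder rather than a recommendation from this guide, while the resolution, `Euler a` sampler, and batch size of 2 follow the settings above.

```python
"""Request a batch of SDXL images from AUTOMATIC1111's web API (sketch)."""
import base64
import json
import urllib.request

API_URL = "http://127.0.0.1:7860/sdapi/v1/txt2img"  # default local address


def build_payload(prompt: str, negative_prompt: str = "", width: int = 1024,
                  height: int = 1024, batch_size: int = 2, steps: int = 30,
                  sampler_name: str = "Euler a") -> dict:
    """Assemble a txt2img request body; steps=30 is only a placeholder."""
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "width": width,
        "height": height,
        "batch_size": batch_size,
        "steps": steps,
        "sampler_name": sampler_name,
    }


def generate(payload: dict, url: str = API_URL) -> list[bytes]:
    """POST the payload and return each generated image as raw bytes."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    # The API returns images as base64-encoded strings.
    return [base64.b64decode(img) for img in body["images"]]
```

Each returned item can be written straight to a `.png` file, which makes it easy to compare several prompt variations side by side.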

- Stay Updated: As SDXL and SD user interfaces develop, you should try to stay updated with the latest releases and improvements. Check the official documentation and community forums like the /r/StableDiffusion subreddit for valuable insights and tips from other users.

- Look Out for Custom Models: Because SDXL is still new, custom models aren’t that common just yet. This is likely to change soon, and you can check resources such as Civitai for some of the best SDXL models to use. Custom models often give better results, as they cater to specific styles and have been fine-tuned further on top of the base model.

Hopefully this guide has been helpful in getting you set up and using the new SDXL model. It’s still early days, but SDXL 1.0 is looking like a huge upgrade over previous models, and we can only expect it to get better as more custom models become available!