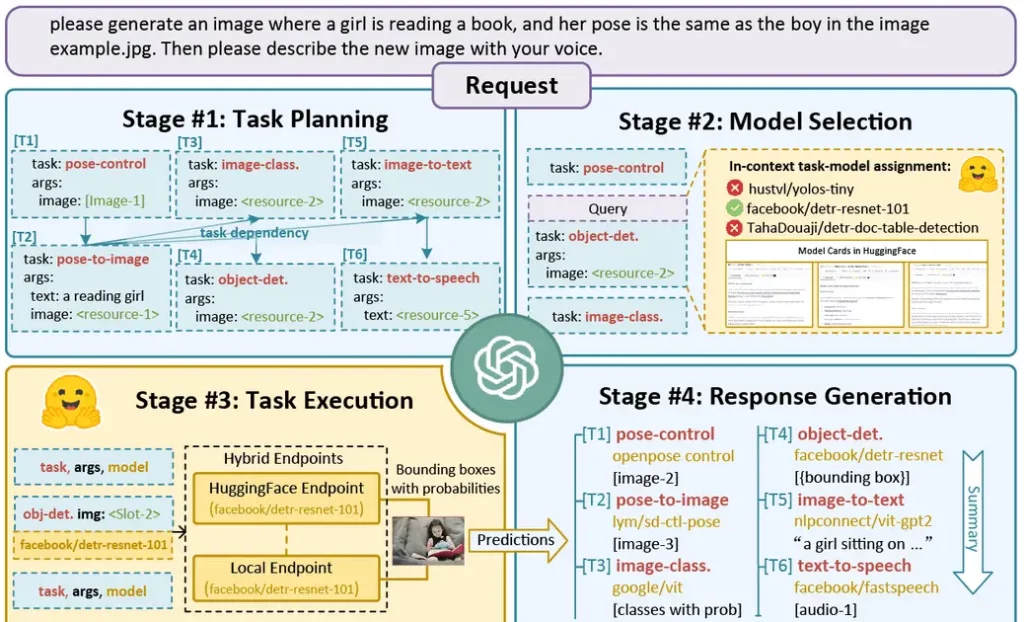

HuggingGPT uses a large language model (LLM) such as ChatGPT as a controller that manages and connects AI models from Hugging Face, so it can solve complicated AI tasks that a text-to-text model could not handle alone. The tool follows a four-stage workflow: task planning, model selection, task execution, and response generation. ChatGPT first interprets the user request and plans the tasks it involves, then selects expert models hosted on Hugging Face based on their descriptions. The selected models are executed, and ChatGPT integrates their results into a final response. HuggingGPT is still in early development, but you can view the GitHub repository here: https://github.com/microsoft/JARVIS
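
To make the four stages concrete, here is a minimal Python sketch of the control flow. It is not the code from the JARVIS repository: every name in it (`ExpertModel`, `MODELS`, `plan_tasks`, `select_model`, `hugging_gpt_sketch`) is hypothetical, the "models" are stub functions, and simple keyword matching stands in for the ChatGPT calls that the real system would make for planning, selection, and response generation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ExpertModel:
    """Hypothetical stand-in for a model hosted on Hugging Face."""
    name: str
    description: str
    run: Callable[[str], str]


# Hypothetical registry; the real system reads model descriptions from Hugging Face.
MODELS = [
    ExpertModel(
        "image-captioner",
        "image to text: describe the content of an image",
        lambda payload: f"a caption for {payload}",
    ),
    ExpertModel(
        "translator",
        "translation: translate English text to French",
        lambda payload: f"French version of '{payload}'",
    ),
]


def plan_tasks(request: str) -> List[str]:
    """Stage 1, task planning. Keyword matching stands in for the LLM here."""
    lowered = request.lower()
    tasks = []
    if "image" in lowered or "picture" in lowered:
        tasks.append("image to text")
    if "translate" in lowered:
        tasks.append("translation")
    return tasks


def select_model(task: str, models: List[ExpertModel]) -> ExpertModel:
    """Stage 2, model selection: pick the first model whose description names the task."""
    for model in models:
        if task in model.description:
            return model
    raise LookupError(f"no model found for task: {task}")


def generate_response(request: str, results: Dict[str, str]) -> str:
    """Stage 4, response generation: merge per-task outputs into one answer."""
    lines = [f"Request: {request}"]
    for task, output in results.items():
        lines.append(f"- {task}: {output}")
    return "\n".join(lines)


def hugging_gpt_sketch(request: str, payload: str) -> str:
    """Run the four stages in order on one request and one input payload."""
    tasks = plan_tasks(request)                                    # stage 1
    chosen = {task: select_model(task, MODELS) for task in tasks}  # stage 2
    results = {task: model.run(payload) for task, model in chosen.items()}  # stage 3
    return generate_response(request, results)                     # stage 4


if __name__ == "__main__":
    print(hugging_gpt_sketch("Describe this image", "photo.png"))
```

The point of the sketch is the separation of roles: the controller only plans, selects, and summarizes, while the actual work happens in whichever expert model matches the task description.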